SMODO – stop motion animation system

The idea behind SMODO was to bring stop-motion animation to all enthusiasts.

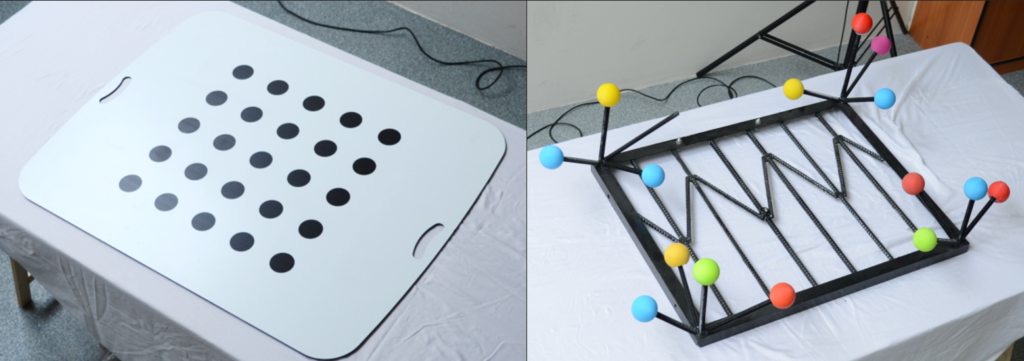

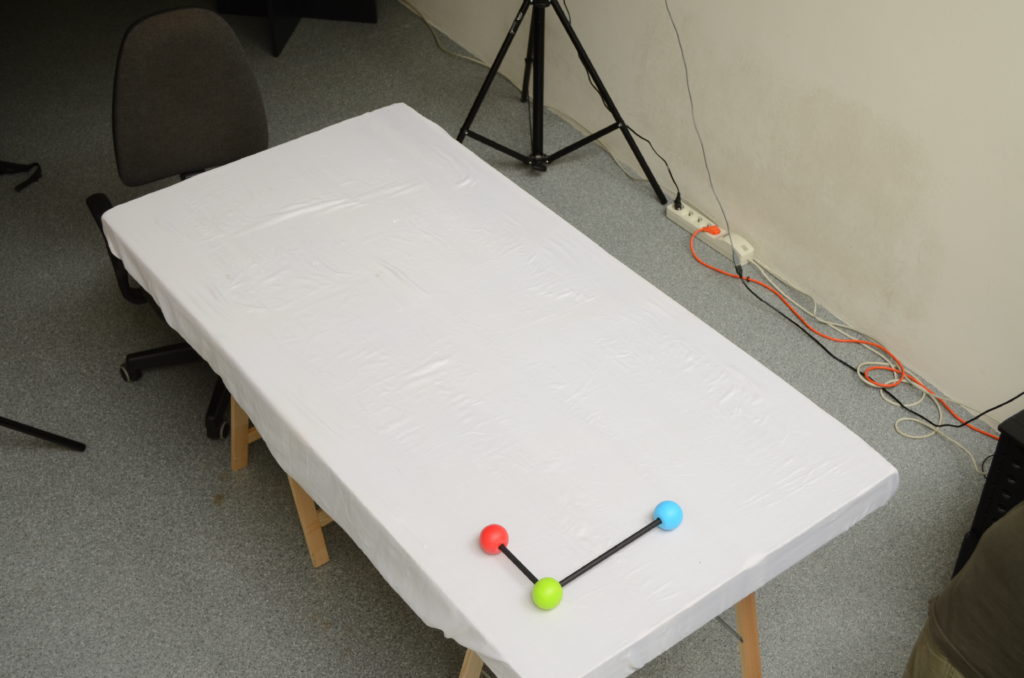

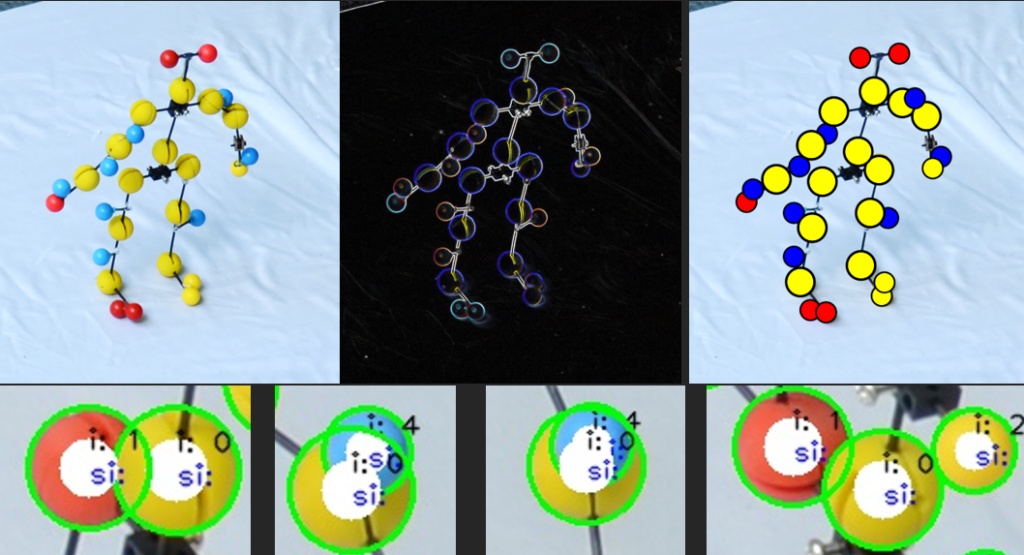

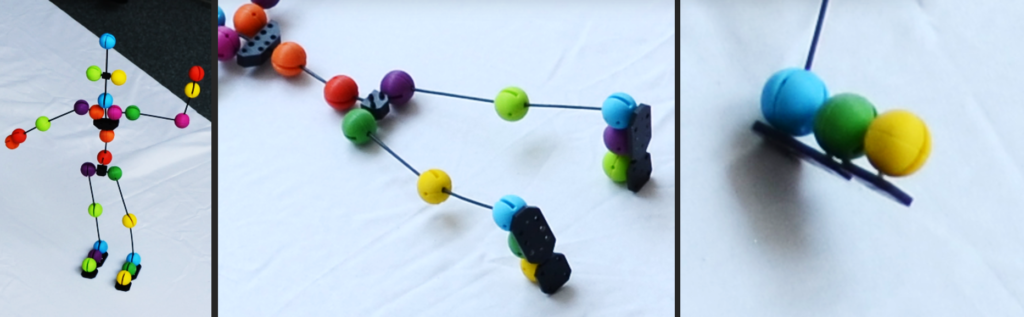

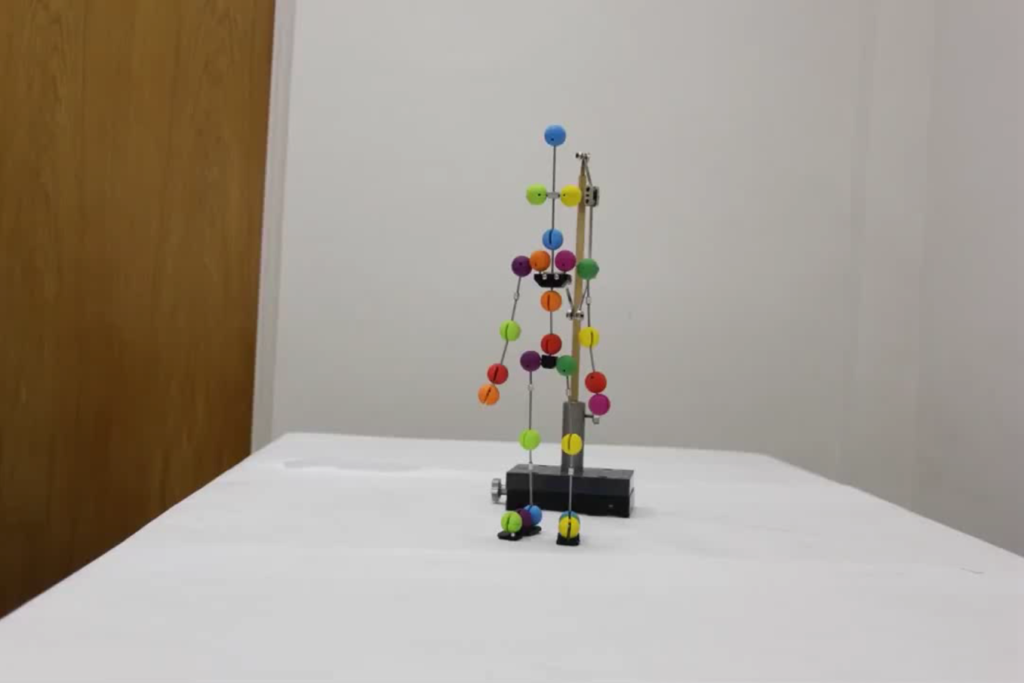

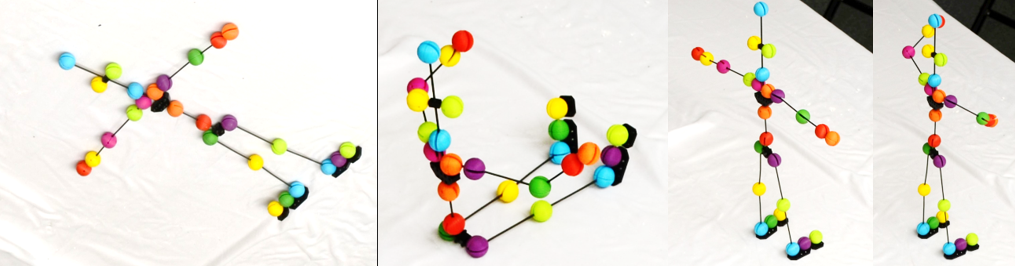

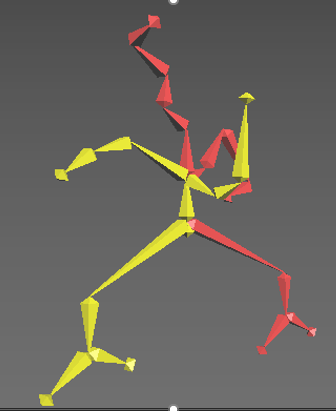

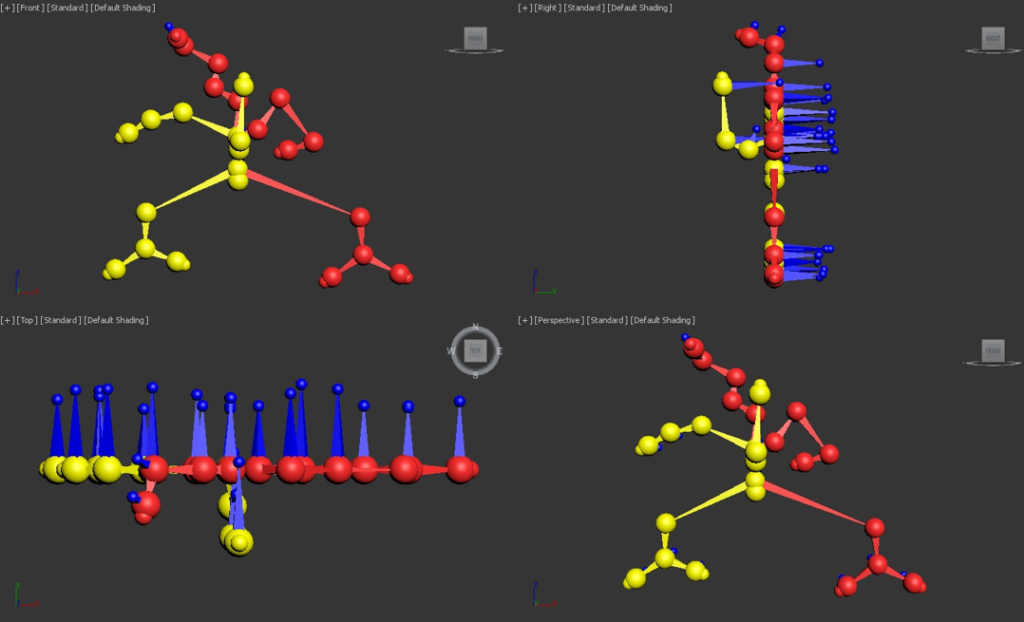

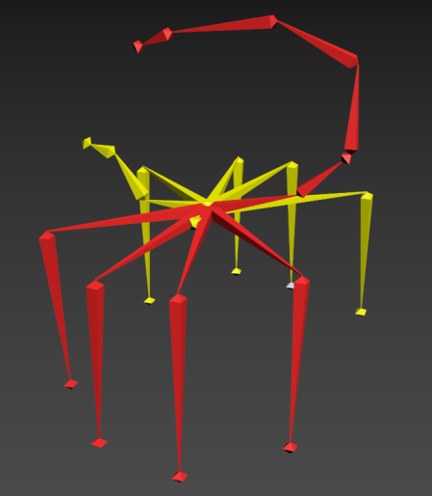

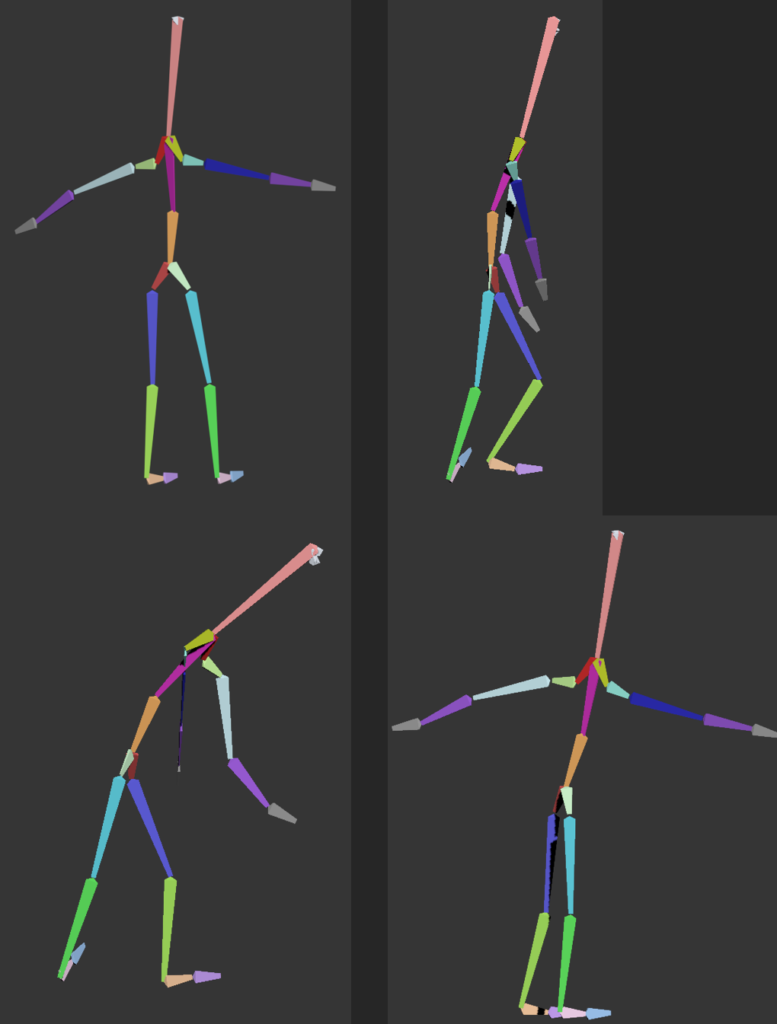

Instead of typical hand-made puppets, which are very expensive and tend to break quickly, the system uses only simple puppet skeletons with an optical marker at every joint. A photogrammetric tracking system obtains the 3D positions of those markers and, from them, the spatial configuration of the skeleton. This information is transferred to 3D animation software (3ds Max, Maya, Blender), in which the puppet's body is modeled.
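
To illustrate how a 3D marker position can be recovered from several cameras, here is a minimal sketch of two-view triangulation using the midpoint method. It is an assumption for illustration only: the text does not say which reconstruction method SMODO uses, and the function name `triangulate_midpoint` and its parameters are hypothetical. Each calibrated camera is assumed to give a ray (camera centre plus direction) through the detected marker.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]


def _sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def _along(origin: Vec3, direction: Vec3, s: float) -> Vec3:
    """Point at parameter s on the ray origin + s * direction."""
    return (
        origin[0] + s * direction[0],
        origin[1] + s * direction[1],
        origin[2] + s * direction[2],
    )


def triangulate_midpoint(c1: Vec3, d1: Vec3, c2: Vec3, d2: Vec3) -> Vec3:
    """Midpoint of the shortest segment between two viewing rays.

    c1, c2 are camera centres; d1, d2 are ray directions toward the
    marker (they do not need to be unit length). Hypothetical helper,
    not the SMODO implementation.
    """
    w = _sub(c1, c2)
    a = _dot(d1, d1)
    b = _dot(d1, d2)
    c = _dot(d2, d2)
    d = _dot(d1, w)
    e = _dot(d2, w)
    denom = a * c - b * b
    if abs(denom) < 1e-12:
        raise ValueError("rays are (nearly) parallel; cannot triangulate")
    s = (b * e - c * d) / denom  # parameter of the closest point on ray 1
    t = (a * e - b * d) / denom  # parameter of the closest point on ray 2
    p1 = _along(c1, d1, s)
    p2 = _along(c2, d2, t)
    return ((p1[0] + p2[0]) / 2, (p1[1] + p2[1]) / 2, (p1[2] + p2[2]) / 2)
```

With noisy detections the two rays rarely intersect exactly, which is why the sketch returns the midpoint of their closest approach. A production system would more likely refine all cameras and markers jointly, for example with bundle adjustment.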

The movement of the skeleton is then used to animate this body. The system is aimed at animation students and hobbyists, who cannot practice with professional puppets because of their cost. SMODO was presented at the biggest animation fair, held in Annecy, where it received very positive feedback.
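
As a rough illustration of how marker positions can drive a rig, the sketch below computes the bend angle at one joint from three reconstructed marker positions (parent joint, joint, child joint). This is an assumed, simplified stand-in for skeleton pose calculation, not the method used in SMODO; the name `joint_angle_deg` is hypothetical.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]


def joint_angle_deg(parent: Vec3, joint: Vec3, child: Vec3) -> float:
    """Angle in degrees between the two rods meeting at `joint`.

    180 means the limb is fully straight, 90 means a right-angle bend.
    Hypothetical helper for illustration only.
    """
    u = tuple(p - j for p, j in zip(parent, joint))
    v = tuple(c - j for c, j in zip(child, joint))
    norm_u = math.sqrt(sum(x * x for x in u))
    norm_v = math.sqrt(sum(x * x for x in v))
    if norm_u == 0.0 or norm_v == 0.0:
        raise ValueError("markers coincide; the angle is undefined")
    cos_angle = sum(a * b for a, b in zip(u, v)) / (norm_u * norm_v)
    # Clamp to guard against rounding slightly outside [-1, 1].
    cos_angle = max(-1.0, min(1.0, cos_angle))
    return math.degrees(math.acos(cos_angle))
```

A single angle per joint is the simplest possible description. A full rig needs a 3D rotation per bone, which requires more information than three collinear-capable points can give, so this should be read as a starting point only.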

My role in the project: 3D processing expert, software architect, lead programmer

| Task | Description |

|---|---|

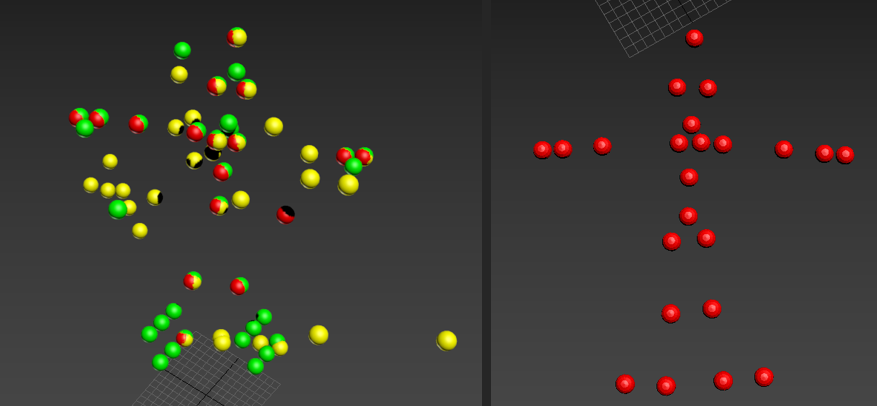

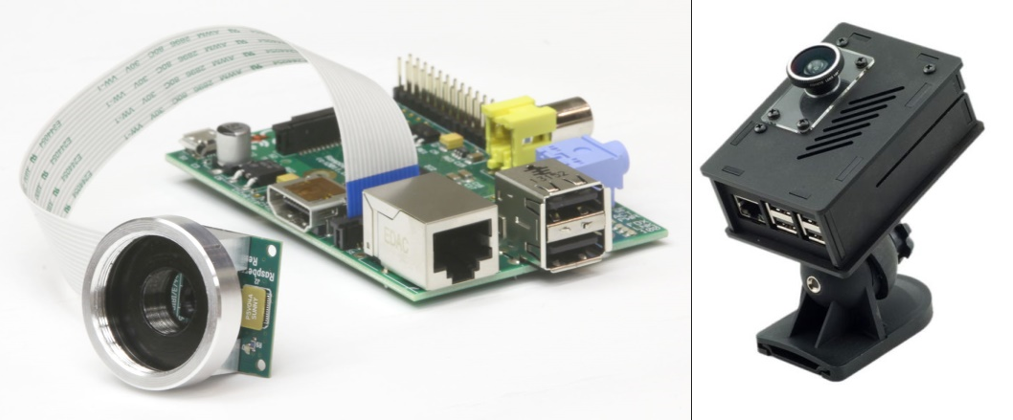

| Spearheaded the architecture of SMODO | Created the full hardware / software system architecture, composed of: – physical cameras setup, – calibration units, – cameras control (utilizing RaspberryPi 3) – photogrammetric calibration of cameras system, – identification of spherical markers (color-based) and their precise position on images, – photogrammetric reconstruction of 3D coordinates of markers (colored spheres), – skeleton pose calculation, – control the system from 3dsmax / Maya / Blender, – transferring skeleton pose to the skeleton model in 3D software. |

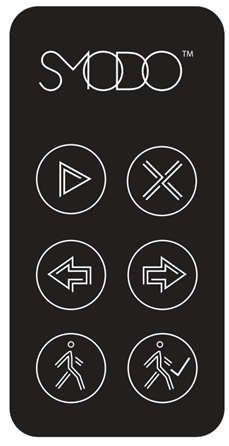

| Led the team of mechanical, electronic and software engineers | Coordinated the multidisciplinary teams. – mechanical engineers worked on the construction of skeletons (most important the joints), tools to allow end users cut the connecting rods to required length, calibration units etc., – electronic engineers worked on the cameras synchronization, cabling, special keyboard (with dedicated keys triggering actions), – software engineers worked on implementation of software modules which were mentioned above. |

| Developed plugins for Autodesk 3dsmax / Maya | Implemented said plugins to communicate with the system controller bidirectionally. 3dsmax / Maya could be used as control interface, but most important was receiving information about skeleton pose and applying to the 3D model in the software. |

| Implemented object localization using neural network and YOLOv3 model | Pure image-processing methods for recognizing the markers (by color) were failing due to low quality of images taken by cameras. Additionally, the color recognition was heavily affected by lighting conditions. It turned out that training YOLOv3 model using annotated images collected with various lighting conditions allowed to obtain much better results. |
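
To make the fragility of color-based marker identification concrete, here is a minimal sketch of a nearest-hue classifier built on Python's standard `colorsys` module. It is an assumption for illustration: the reference hues in `MARKER_HUES`, the saturation and brightness thresholds, and the function name `classify_marker` are hypothetical and not taken from SMODO.

```python
import colorsys
from typing import Optional, Tuple

# Hypothetical reference hues in degrees; the real marker colors are not
# specified in the text.
MARKER_HUES = {"red": 0.0, "green": 120.0, "blue": 240.0}


def _hue_distance(a: float, b: float) -> float:
    """Distance between two hues on the 360-degree color circle."""
    d = abs(a - b) % 360.0
    return min(d, 360.0 - d)


def classify_marker(
    rgb: Tuple[int, int, int],
    min_saturation: float = 0.3,
    min_value: float = 0.2,
) -> Optional[str]:
    """Return the closest marker color name, or None if the pixel is too
    washed out or too dark for its hue to be trusted."""
    r, g, b = (channel / 255.0 for channel in rgb)
    h, s, v = colorsys.rgb_to_hsv(r, g, b)
    if s < min_saturation or v < min_value:
        return None
    hue_deg = h * 360.0
    return min(MARKER_HUES, key=lambda name: _hue_distance(hue_deg, MARKER_HUES[name]))
```

A well-lit red marker such as `(200, 30, 30)` is classified reliably, while the same marker under flat or overexposed lighting can drift toward something like `(120, 120, 125)`, where saturation is too low and the function returns `None`. Failures of this kind are consistent with the motivation given above for switching to a trained detector.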

| Technology | Purpose |
|---|---|
| C++ | Camera control, image processing, photogrammetric algorithms, calibration algorithms, synchronization, Autodesk 3ds Max / Maya plugins. |
| Python | Calibration control, Blender plugin, neural network training. |
| Theia SfM | Photogrammetric calibration, bundle adjustment. |
| PyTorch | Training of the YOLOv3 model for detecting and identifying skeleton markers. |
| Autodesk 3ds Max, Maya, Blender | 3D model rigging, final animation. |